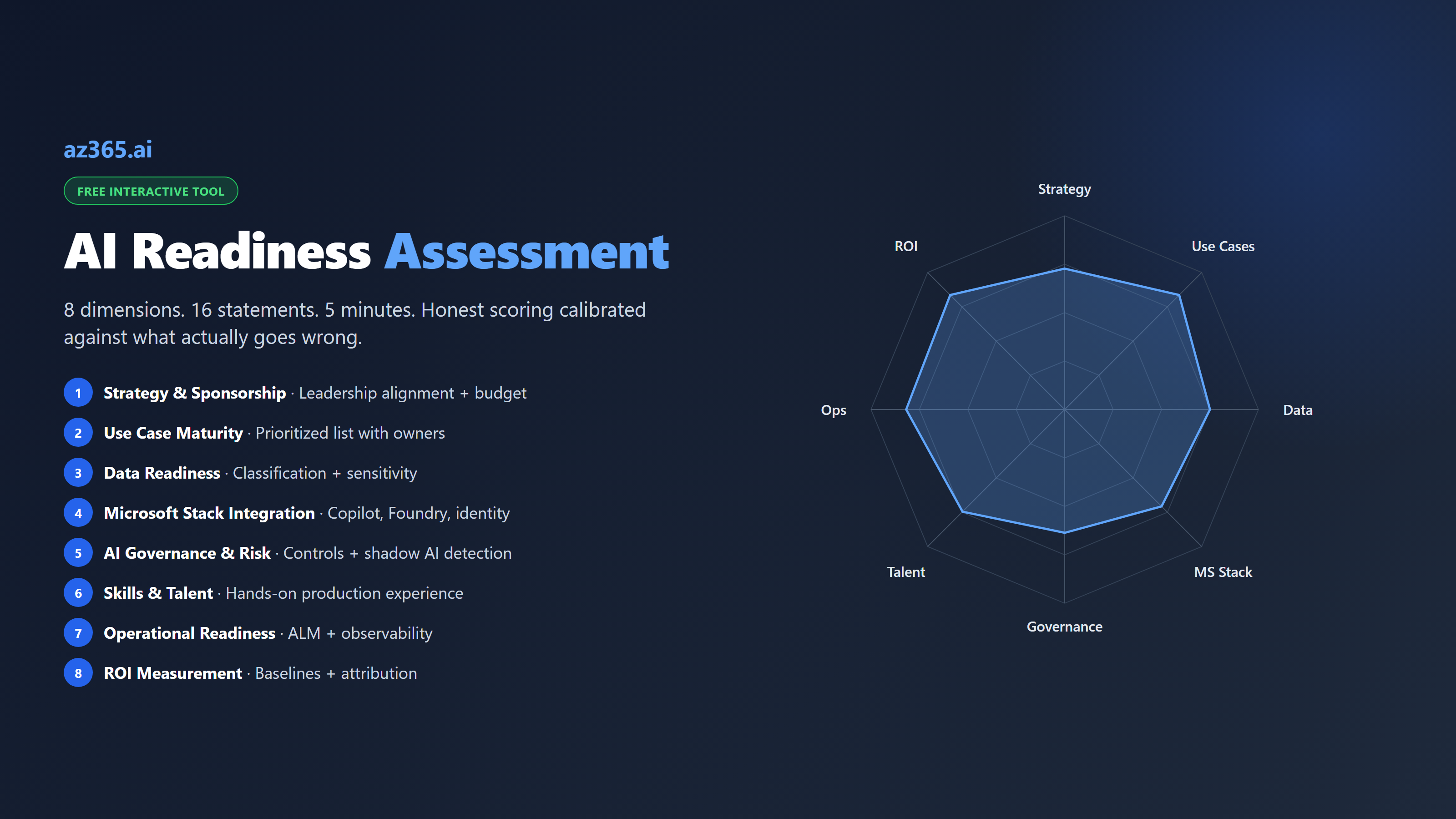

AI Readiness Assessment for Microsoft Enterprises: 8 Dimensions, Honest Scoring

Free 8-dimension AI readiness assessment for Microsoft enterprises. 16 statements, 5 minutes, calibrated against what actually goes wrong in production deployments. Microsoft-stack-specific recommendations.

Most AI readiness assessments are calibrated to sell something. The vendor’s questionnaire just happens to discover that the gap in your readiness is a product they sell. The advisory firm’s framework just happens to recommend a 6-month engagement. The free downloadable PDF just happens to require an enterprise license to act on.

This one is calibrated against what actually goes wrong. Eight dimensions, sixteen statements, five minutes. Honest answers only. The output is a maturity score, the three weakest dimensions, and a Microsoft-specific recommendation per gap. No sales pitch.

Take the assessment first, then read the rest of the article to interpret the score.

Free Tool

AI Readiness Assessment

16 statements across 8 dimensions. Takes 3-5 minutes. Honest answers only.

What “AI Readiness” Actually Means

The most common mistake in AI readiness work is treating it like cloud migration: a one-time assessment, a multi-quarter program, then “done.” AI readiness is not a state you reach. It is a posture you maintain.

The reason is that AI capability evolves faster than organizational maturity does. The frontier model that ships next quarter changes what your data needs to do, what your governance has to cover, and what your skills gap looks like. An organization that scored “Strategic” on AI readiness in 2024 can be “Operational” in 2026 simply because the bar moved.

So the right way to read your score is not “how far we have to go” but “where the program will fail next.” A score of 60% on Operational Readiness is not a passing grade. It is a flag that says “your next production AI feature will probably ship without observability, and the audit team will find out about it in a quarter.”

The eight dimensions in the assessment above are calibrated against failure patterns documented in Microsoft-stack enterprise AI programs (sources: the Microsoft Responsible AI Standard, the NIST AI RMF, and public Microsoft customer stories). Each dimension asks not just “do you have this” but “is this strong enough to absorb the next AI capability shift without breaking.”

Why Most AI Readiness Assessments Are Useless

Three patterns make most readiness assessments useless in practice.

Pattern 1: Generic dimensions with no operational specificity. Many frameworks ask “do you have an AI governance program?” The answer is binary in the framework, ternary in reality (yes-it-is-real, yes-it-is-a-PDF, no). Asking “can you detect shadow AI in your tenant within 7 days” is a different question entirely, and it produces an actionable answer.

Pattern 2: Vendor-shaped questions. A vendor that sells a data quality platform writes a readiness assessment that finds data quality is your gap. A vendor that sells responsible AI consulting writes a readiness assessment that finds governance is your gap. The question is not “what does the assessment say is your gap” but “what does an honest accounting of your operational reality say is your gap.”

Pattern 3: Maturity bands without specificity. Most assessments produce labels like “Beginner / Intermediate / Advanced” without telling you what changes when you cross from one to the next. The score above produces a band and a concrete next action for each of the three weakest dimensions. That is the part most assessments skip.

The eight-dimension assessment above is intentionally narrow: 16 statements, 5-point Likert scale, no industry-specific tailoring. The narrowness is the point. Broad assessments take days; nobody completes them honestly. A 5-minute assessment gets honest answers, and the dimensions are calibrated tightly enough that the gaps mean something.
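The article does not publish the scoring formula, so the following is only a minimal sketch of how 16 Likert answers could roll up into dimension percentages. It assumes two statements per dimension, answers from 1 to 5, and a dimension percentage equal to points earned over the maximum of 10. The function name `score_assessment` and the rescaling choice are illustrative assumptions, not the tool's actual implementation.

```python
from statistics import mean

DIMENSIONS = [
    "Strategy & Sponsorship",
    "Use Case Maturity",
    "Data Readiness",
    "Microsoft Stack Integration",
    "AI Governance & Risk",
    "Skills & Talent",
    "Operational Readiness",
    "ROI Measurement",
]

def score_assessment(responses):
    """responses: 16 Likert answers (1-5), two consecutive answers per dimension.

    Assumption: a dimension's percentage is points earned / 10 possible points.
    Returns (overall percentage, per-dimension percentages, three weakest dimensions).
    """
    if len(responses) != 2 * len(DIMENSIONS):
        raise ValueError("expected 16 responses")
    if any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("each response must be an integer from 1 to 5")
    by_dimension = {}
    for i, name in enumerate(DIMENSIONS):
        pair = responses[2 * i : 2 * i + 2]
        by_dimension[name] = sum(pair) * 100 / 10
    overall = mean(by_dimension.values())  # equal weight per dimension (assumed)
    weakest = sorted(by_dimension, key=by_dimension.get)[:3]
    return overall, by_dimension, weakest
```

With equal weighting, every dimension moves the overall score by the same amount, which is why the dimension breakdown carries more information than the headline number.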

The Eight Dimensions, Explained

1. Strategy & Sponsorship

Has senior leadership signed off on the AI vision, with budget? In practice this is the thing that separates programs that survive the first organizational reset from programs that die when the executive sponsor moves on. Without explicit budget for the next 12 months, every AI initiative becomes politically negotiable when budgets get tight in Q3.

Microsoft-specific signal: Look at whether your AI investments are line items in the IT budget or hidden inside other line items. Hidden investments do not survive scrutiny.

2. Use Case Maturity

A prioritized list of AI use cases tied to specific business outcomes is the difference between a program that ships and a program that runs proof-of-concepts forever. Each use case needs a named business owner accountable for value delivery. “IT owns AI” is not ownership; it is a stalled procurement project.

Microsoft-specific signal: For M365 Copilot rollouts, this means specific personas (Sales, Customer Service, Finance) with specific workflow targets, not a blanket per-seat license assignment.

3. Data Readiness

AI cannot reason over data it cannot find or classify. Most Microsoft enterprises have data spread across Dataverse, SharePoint, OneDrive, file shares, on-prem databases, and SaaS platforms. The readiness question is whether you can answer “where is the customer data, who owns it, what sensitivity label is on it” for your top 10 data sources.

Microsoft-specific signal: Microsoft Purview classification rules are the operational test. If they are deployed and producing accurate sensitivity labels, the program can scale Copilot safely. If they are not, every Copilot rollout carries data-leakage risk.
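The “where is it, who owns it, what label is on it” question can be treated as a completeness check over an inventory. The sketch below is hypothetical: the `DataSource` record, its field names, and the sample entries are assumptions for illustration, and the values would come from wherever your inventory is actually maintained rather than from any specific Purview API.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class DataSource:
    name: str
    location: Optional[str] = None           # where the data lives
    owner: Optional[str] = None              # named accountable owner
    sensitivity_label: Optional[str] = None  # e.g. "Confidential"

def unanswered(sources):
    """Map each source name to the readiness questions it cannot answer yet."""
    gaps = {}
    for src in sources:
        missing = [
            attr for attr in ("location", "owner", "sensitivity_label")
            if not getattr(src, attr)
        ]
        if missing:
            gaps[src.name] = missing
    return gaps

# Hypothetical inventory entries, not real data.
inventory = [
    DataSource("CRM accounts", location="Dataverse",
               owner="Sales Ops", sensitivity_label="Confidential"),
    DataSource("Contract archive", location="SharePoint"),
]
print(unanswered(inventory))
```

An empty result for your top 10 data sources is the state the dimension is asking about; anything else is the list of gaps to close before scaling Copilot.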

4. Microsoft Stack Integration

How well-integrated is your AI surface with the rest of the Microsoft estate? M365 Copilot deployed without conditional access policies is a different program from M365 Copilot deployed with grounding controls and identity-based scoping. The difference is in operational risk, not in license cost.

Microsoft-specific signal: Document your decision rules for Copilot vs Foundry vs direct AI provider APIs. When teams pick differently, the result is shadow AI in three different procurement categories.

5. AI Governance & Risk

The full operational governance program is covered in AI Governance Framework for Microsoft Enterprises. For the readiness assessment, the two questions that matter are: do you have a documented framework with named owners, and can you detect shadow AI within 7 days?

Microsoft-specific signal: If you scored low here, Shadow AI Governance is the fastest visibility win. 30 days to baseline inventory (based on Microsoft’s documented setup time for Defender for Cloud Apps + Power Platform CoE Toolkit).

6. Skills & Talent

A purely in-house team ramps too slowly. A purely partner-led program stalls when contracts end. The middle path is mixing in-house upskilling with senior partner support for the first two production deployments, then graduating to majority-internal delivery.

Microsoft-specific signal: The skills gap that matters most is not prompt engineering. It is integration engineering: the developers who can wire Foundry agents into existing systems, configure Logic Apps as MCP servers, and ship production AI features through your ALM pipeline.

7. Operational Readiness

AI features should ship through the same ALM pipeline as everything else. Code review, automated tests, deployment gates, observability. The most common failure pattern is the one-off AI feature that bypasses ALM entirely because “it is just a Copilot Studio agent” or “it is just a Power Automate flow.” Those one-offs accumulate until the SOC has 50 AI features in production with no audit logging on any of them.

Microsoft-specific signal: Foundry observability + Microsoft Sentinel detection content packs are the operational floor. If they are not deployed, you are not operationally ready regardless of how mature your other dimensions look.

8. ROI Measurement

The single biggest reason AI programs lose funding is “we cannot prove ROI.” The single biggest reason teams cannot prove ROI is that they did not capture baseline metrics before the rollout. Once the rollout is in flight, the comparison is “post-AI vs. memory of pre-AI,” which the CFO will rightly reject.

Microsoft-specific signal: Use Power BI / Fabric for the ROI dashboard. If the AI initiative does not have a Power BI report attributing measurable productivity or cost savings to specific features within 90 days of deployment, it is at risk of defunding even if it is delivering value.
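The baseline argument comes down to simple arithmetic: a metric can only be reported as a change if a pre-rollout value exists. The following sketch assumes metrics arrive as plain name-to-value dictionaries; the function names and the metric names in the example are hypothetical, and a real dashboard would do this in Power BI / Fabric rather than in a script.

```python
def percent_change(baseline, current):
    """Relative change from the pre-rollout baseline, in percent."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (current - baseline) / baseline * 100

def roi_report(baseline_metrics, current_metrics):
    """Report a change only for metrics that were captured before rollout."""
    report = {}
    for name, current in current_metrics.items():
        if name not in baseline_metrics:
            # No baseline captured: there is nothing defensible to claim.
            report[name] = None
        else:
            report[name] = percent_change(baseline_metrics[name], current)
    return report

# Hypothetical numbers for illustration only.
baseline = {"avg_minutes_per_ticket": 40.0}
current = {"avg_minutes_per_ticket": 30.0, "proposals_per_week": 12.0}
print(roi_report(baseline, current))
```

In this example the ticket metric shows a 25% reduction, while the proposals metric has no value at all because nobody measured it before the rollout.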

How to Read Your Score

The overall percentage is less useful than the dimension breakdown. Two organizations with the same 65% overall score can have wildly different problems: one is uniformly mediocre across dimensions (steady but unimpressive), the other has 80% on six dimensions and 20% on two (one quarter away from a serious failure).

The honest reading sequence:

- Look at the lowest-scoring dimension first. The recommendation under that dimension is the highest-leverage next action.

- Look at the spread between highest and lowest. A spread under 30 points is a balanced program. A spread over 50 points is a program with a hidden failure point.

- Compare the Strategy & Sponsorship score to the rest. A high Strategy score with low operational scores is “leadership wants AI but the team cannot deliver.” A low Strategy score with high operational scores is “the team is shipping but leadership is not aligned.” Both are unstable, in different ways.
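The reading sequence can be sketched as a small function over the dimension percentages. The 30-point and 50-point thresholds come from the list above; everything else is an assumption for illustration, including the function name, the “unlabeled” tag for spreads between 30 and 50 (which the article does not name), and the choice to compare Strategy against the plain average of the other seven dimensions. The two sample profiles are hypothetical and chosen so that both average 65%.

```python
from statistics import mean

STRATEGY = "Strategy & Sponsorship"

def read_scores(by_dimension):
    """by_dimension: dict of dimension name -> percentage (0-100)."""
    lowest = min(by_dimension, key=by_dimension.get)
    spread = max(by_dimension.values()) - min(by_dimension.values())
    if spread < 30:
        balance = "balanced"
    elif spread > 50:
        balance = "hidden failure point"
    else:
        balance = "unlabeled"  # the article gives no name for 30-50
    others = [v for k, v in by_dimension.items() if k != STRATEGY]
    strategy_gap = by_dimension[STRATEGY] - mean(others)
    return {"lowest": lowest, "spread": spread,
            "balance": balance, "strategy_gap": strategy_gap}

names = [
    STRATEGY, "Use Case Maturity", "Data Readiness",
    "Microsoft Stack Integration", "AI Governance & Risk",
    "Skills & Talent", "Operational Readiness", "ROI Measurement",
]
uniform = {n: 65 for n in names}   # same score everywhere
lopsided = {n: 80 for n in names}  # strong on six dimensions...
lopsided["Operational Readiness"] = 20  # ...weak on two
lopsided["ROI Measurement"] = 20

print(read_scores(uniform))
print(read_scores(lopsided))
```

Both profiles have the same overall average, but only the second one reports a 60-point spread and a positive Strategy gap, which is the “leadership wants AI but the team cannot deliver” shape.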

What to Do With the Results

For any score below 50% overall: focus the program on the three weakest dimensions for the next 90 days. Do not spread effort across all eight; you will not move any of them enough.

For 50-70%: you are most likely “Operational” in the maturity model. The work is closing the consistency gap. Pick the dimension where the inconsistency hurts most (usually Operational Readiness or AI Governance & Risk) and standardize.

For 70%+: the program is in good shape. The risk is no longer execution, it is keeping pace as AI capabilities evolve. Add a quarterly readiness review to the cadence. Re-run this assessment every quarter and watch for dimension scores that drift down even when nothing structurally changed; that is a sign the bar moved.
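A minimal sketch of the three bands and the quarterly drift check follows. The 50% and 70% cut-offs and the three-weakest rule are from the text above; treating exactly 70% as the top band, the `min_drop` noise threshold of 5 points, and the function names are assumptions made for the example.

```python
def next_step(overall, by_dimension):
    """Map an overall percentage to the action the article recommends."""
    if overall < 50:
        weakest = sorted(by_dimension, key=by_dimension.get)[:3]
        return "focus for 90 days on: " + ", ".join(weakest)
    if overall < 70:  # assumption: exactly 70 falls in the top band
        return "close the consistency gap in one dimension and standardize"
    return "add a quarterly readiness review"

def drifting(previous, current, min_drop=5):
    """Dimensions whose score fell by at least min_drop points since last quarter.

    min_drop is an assumed noise threshold, not a figure from the article.
    """
    return {
        name: previous[name] - current[name]
        for name in current
        if name in previous and previous[name] - current[name] >= min_drop
    }

# Hypothetical quarter-over-quarter scores.
last_quarter = {"Data Readiness": 80, "Operational Readiness": 70}
this_quarter = {"Data Readiness": 72, "Operational Readiness": 69}
print(drifting(last_quarter, this_quarter))
```

A dimension that shows up in the drift result without any structural change behind it is the “bar moved” signal described above.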

Microsoft-Specific Interpretation

Two patterns I see consistently in Microsoft-shop assessments:

Strong Microsoft Stack Integration, weak ROI Measurement. This is the “we are running Copilot at scale but cannot prove it is working” pattern, documented in Microsoft customer stories like Vodafone and Dentsu where measurement was bolted on after rollout. The fix is going back and capturing baseline metrics for the workflows Copilot is supposed to improve, then reverse-engineering ROI from current state. Power BI / Fabric is the right tool. Three months of data collection produces a defensible ROI story.

Strong Use Case Maturity, weak AI Governance. The “we have great use cases shipping fast and no oversight” pattern. The fix is implementing the six-component governance framework without disrupting the use cases that are working. Add the controls in the order: Inventory > AIBoM > Audit > Approval Gates > Data Residency > Incident Response. The first three can ship in 30 days without slowing existing programs.

What This Tells You About AI Programs

Three observations from the patterns this assessment is calibrated against:

First: most AI readiness gaps are visible within 5 minutes if you ask the right questions. The 16 statements above are not magic. They are calibrated against patterns that recur across Microsoft enterprises. If your score makes you uncomfortable, the discomfort is information; do not rationalize it away.

Second: a high score on Strategy without a high score on Operations is not readiness, it is wishful thinking. Leadership alignment on AI is necessary but not sufficient. The program ships through the same ALM pipeline that ships everything else, or it does not ship.

Third: AI readiness is the multiplier on every Microsoft AI investment. Two organizations buying the same Copilot license get different value because one has the readiness foundation and the other does not. The readiness work is the multiplier; the license is the input. Skipping the readiness work is the most expensive shortcut in enterprise AI.

Related Reading

- AI Governance Framework for Microsoft Enterprises: Operational Controls That Ship - Dimension 5 in the readiness assessment is governance; this is the framework

- Shadow AI Governance for Microsoft Enterprises: Discovery to Control - the operational deep-dive on detecting shadow AI in 30 days

- AI Copilots vs Custom AI on Azure: Build vs Buy - the build/buy decision for the Microsoft Stack Integration dimension

The 8-dimension scoring above is designed to be self-administered. Run it against your stack, identify the three biggest gaps, and start there.
