Topical cluster · for IT directors, compliance leaders, and AI risk officers

AI Governance & Risk

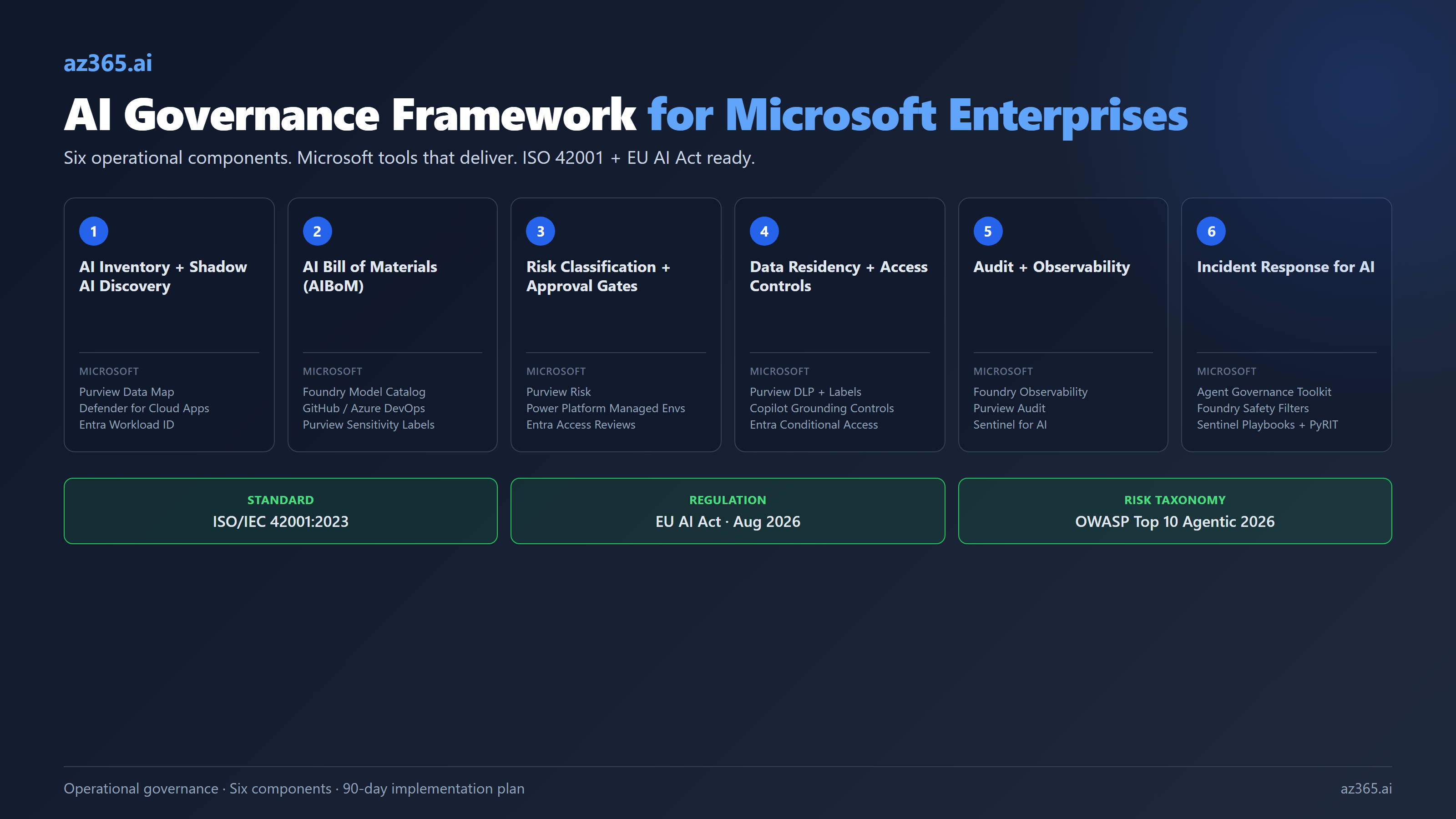

AI governance has two failure modes: do nothing and end up with shadow AI you cannot see, or do too much and end up with a policy nobody can deploy against. The middle path is operational governance: rules tied to the platform you actually run, gates that fire automatically, and inventories that update themselves.

Articles: 2

This cluster covers AI-specific governance for the Microsoft stack: ISO 42001 implementation, shadow AI discovery and controls, AI bills of materials, model risk frameworks, data residency, and responsible AI implementation as a working program rather than a slide deck.

If you are the IT director responsible for "we have AI governance" and the answer cannot be a PDF nobody reads, start here.

What this cluster covers

Subtopics in AI Governance & Risk

- ISO 42001 implementation on Microsoft stacks

- EU AI Act readiness (high-risk system controls, August 2026 deadline)

- Shadow AI discovery (Defender for Cloud Apps, Power Platform CoE)

- AI Bill of Materials (AIBoM) templates

- Microsoft Purview + Sentinel for AI audit and observability

- Risk classification and approval gates wired to managed environments

Common questions

AI Governance & Risk FAQ

The questions that come up most often in AI governance and risk engagements, with answers grounded in Microsoft documentation and field experience.

Do I need a separate AI governance policy or can I extend my existing GRC framework?

Extend your existing GRC framework. AI-specific risks (prompt injection, training data provenance, model drift) layer on top of standard data governance, not next to it. ISO 42001 is explicitly designed to integrate with ISO 27001 if you already certify there.

How do I find shadow AI in my tenant?

Three discovery surfaces: Microsoft Defender for Cloud Apps for SaaS AI tools, Power Platform CoE Toolkit for AI features inside flows and apps, and Entra Workload Identity inventory for service principals making OpenAI or Anthropic API calls. Most enterprises that run a discovery sweep find 30-200 shadow AI systems they did not know existed.
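As a rough sketch of how findings from the three discovery surfaces could be consolidated, the Python below merges per-surface rows into one deduplicated inventory. The record shape, the surface labels, and the sample rows are all hypothetical. Real exports from Defender for Cloud Apps, the CoE Toolkit, and Entra have their own fields and would need their own parsing before reaching this step.

```python
from dataclasses import dataclass, field


@dataclass
class AISystem:
    """One discovered AI system. Field names are illustrative, not a Microsoft schema."""
    name: str
    owner: str
    surfaces: set[str] = field(default_factory=set)


def merge_findings(findings: list[tuple[str, str, str]]) -> dict[str, AISystem]:
    """Merge (surface, name, owner) rows into one inventory keyed by lowercased name."""
    inventory: dict[str, AISystem] = {}
    for surface, name, owner in findings:
        key = name.strip().lower()
        # The first sighting creates the record; later sightings only add their surface.
        system = inventory.setdefault(key, AISystem(name=name.strip(), owner=owner))
        system.surfaces.add(surface)
    return inventory


# Hypothetical sample rows standing in for parsed exports from each surface.
sample_findings = [
    ("defender_for_cloud_apps", "Example Chat Assistant", "unknown"),
    ("power_platform_coe", "Invoice Summarizer Flow", "finance"),
    ("entra_workload_identity", "example chat assistant", "unknown"),
]

inventory = merge_findings(sample_findings)
for system in inventory.values():
    print(f"{system.name}: seen on {sorted(system.surfaces)}")
```

Keying on a normalized name is the simplest possible matching rule. In practice the same tool often appears under different names on different surfaces, so a real inventory would likely need a stronger identifier such as an application ID or endpoint domain.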

What does the EU AI Act require by August 2026?

Full enforcement of high-risk system rules: risk management across the AI lifecycle, data governance for training data quality and bias, technical documentation (the AIBoM), logging for traceability, human oversight measures, and conformity assessment before placing on the market. ISO 42001 is the de facto operational evidence framework.
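One way to turn that list into something trackable is a per-system evidence register that reports which requirement areas still have nothing filed against them. The sketch below is a minimal illustration: the requirement keys paraphrase the list above and are not legal text, and the evidence file names are made up.

```python
# Requirement keys paraphrase the answer above; they are labels for this sketch, not legal text.
HIGH_RISK_REQUIREMENTS = [
    "risk_management",
    "data_governance",
    "technical_documentation",
    "logging",
    "human_oversight",
    "conformity_assessment",
]


def readiness_gaps(evidence: dict[str, list[str]]) -> list[str]:
    """Return the requirement areas that have no evidence artifact recorded."""
    return [req for req in HIGH_RISK_REQUIREMENTS if not evidence.get(req)]


# Hypothetical evidence register for a single AI system.
evidence = {
    "risk_management": ["risk-register-2025.xlsx"],
    "technical_documentation": ["aibom-v1.json"],
    "logging": [],  # area acknowledged, but no artifact filed yet
}

gaps = readiness_gaps(evidence)
print(gaps)
```

An empty list and a missing key are both treated as gaps, so an area that has been acknowledged but not evidenced still shows up in the report.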

Can Microsoft Purview alone replace a third-party AI governance platform?

For Microsoft-stack workloads, mostly yes. Purview Data Map plus DSPM for AI plus Audit covers about 80% of typical AI governance requirements on Microsoft tenants. The 20% gap (deep model evaluation, multi-cloud AI inventory, specialized red-teaming) usually warrants a third-party tool, but you do not need it on day one.

Other clusters

AI Cost & ROI

For CTOs.

AI Strategy & Readiness

For CTOs.

AI Architecture

For IT directors.

Microsoft Copilot Governance

For IT directors.

Copilot Adoption & Recovery

For IT directors and execs running Copilot programs that are not converting to value.

Power Platform Governance & Operations

For Power Platform admins.

Architecture Documentation & Diagramming

For solution architects and senior engineers who own architecture documentation.