AI Governance Framework for Microsoft Enterprises: Operational Controls That Ship

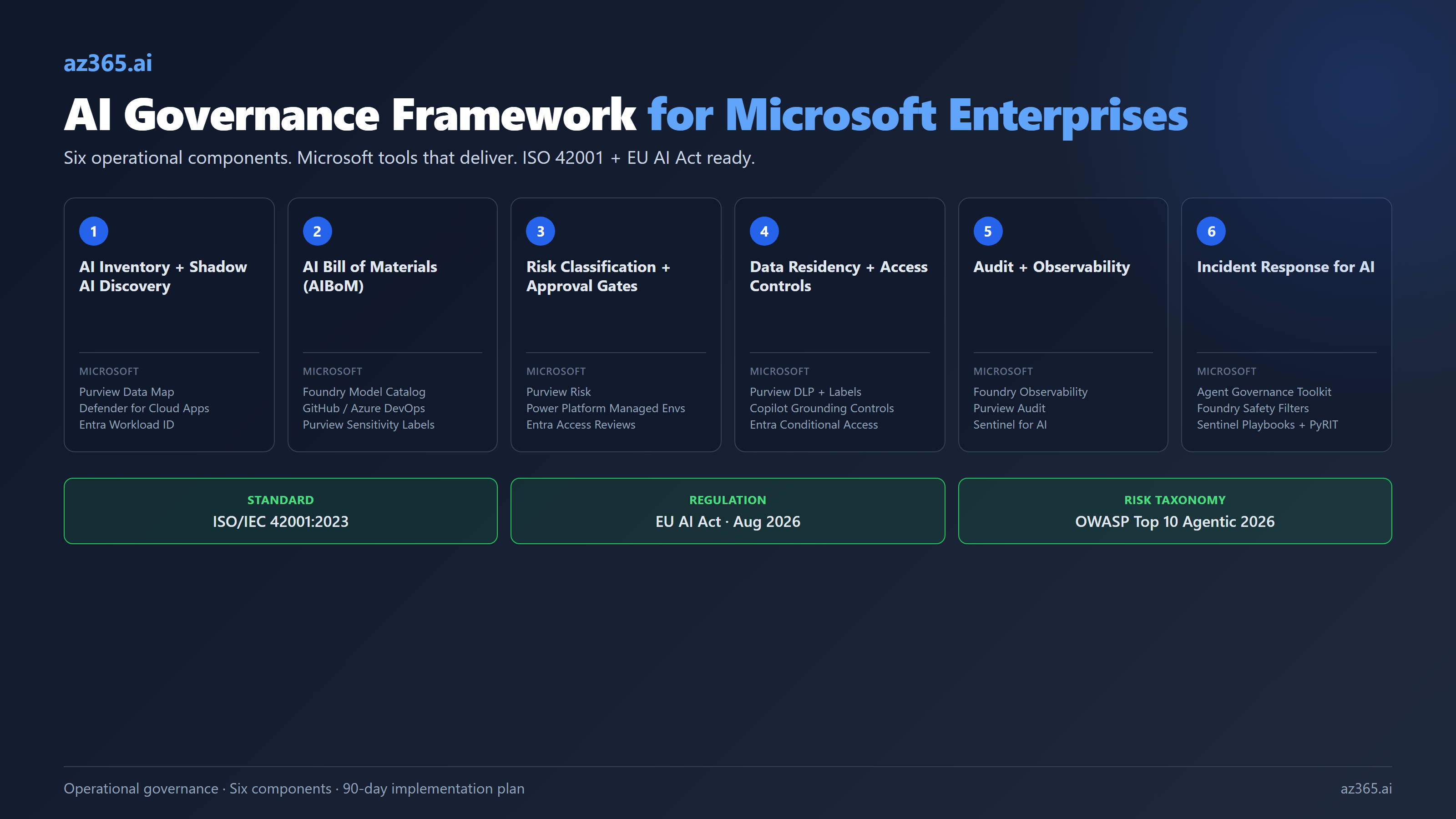

An operational AI governance framework for Microsoft stacks. Six components, ISO 42001 alignment, EU AI Act readiness, and the Microsoft tools (Purview, Entra, Foundry, Agent Governance Toolkit) that make it work.

Most AI governance frameworks are PDFs. Two hundred pages of principles, three pages of policy, zero working controls. The IT director gets handed the document, schedules a workshop to “operationalize the framework,” and six months later the only thing that has shipped is a slide deck that mentions “responsible AI” eleven times.

This is not what governance looks like. Governance, when it works, is a set of automatic gates wired into the systems that already run the business. It is the agent identity that gets revoked when an employee leaves. The DLP policy that blocks the high-risk connector before the user finishes the workflow. The Foundry trace that tells the audit team which tenant invoked which model with which prompt at 2am. It is not a slide. It is a control.

Microsoft sells most of the tools you need: Purview for data governance, Entra for agent identity, Foundry for observability, Agent Governance Toolkit for runtime compliance grading. What Microsoft does not sell is the framework that ties them together. That gap is where most AI governance programs fail, and it is what this article fixes.

Why Most AI Governance Programs Fail

Three failure modes account for almost every stalled AI governance program I have audited.

Failure mode 1: Policy without controls. The legal team writes a 40-page Responsible AI policy. It says employees must use AI ethically, must not feed customer data to non-approved models, must classify AI risk before deployment. None of this is enforced anywhere except in the document itself. Six months later, the audit reveals 200 unsanctioned ChatGPT subscriptions on company cards and a Power Automate flow that emails customer financial data to an unapproved Anthropic API endpoint. The policy did not lose. The policy was never connected to anything.

Failure mode 2: Controls without ownership. IT enables Microsoft Purview, configures DLP policies on the M365 Copilot rollout, and sets up Foundry observability. Nobody owns the alerts. The Purview audit log fills up with sensitive data classifications nobody reviews. The Foundry trace flags a prompt injection attempt at 3am and the alert goes to a team mailbox that nobody monitors. Tools exist. Operations do not.

Failure mode 3: The framework is too big to start. The team reads NIST AI RMF, Big-4 advisory whitepapers, and the EU AI Act and tries to build a single program covering all of them. Two quarters in, scope creep has the program covering 47 controls, the implementation roadmap is 18 months, and nothing has shipped. Better to ship six controls in 90 days and add the rest in increments.

The fix for all three is operational governance: small, owned, automatic. Six components, mapped to the Microsoft tools you already have or can buy, each with a named owner and a measurable signal.

The Six Components of a Working Framework

| Component | What it does | Microsoft tool that delivers it | Owner role |
|---|---|---|---|
| 1. AI Inventory + Shadow AI Discovery | Knows every AI system in use: sanctioned and unsanctioned | Purview Data Map + Entra Workload Identity + Defender for Cloud Apps | IT Director / CoE Lead |
| 2. AI Bill of Materials (AIBoM) | For each AI system, lists the model, training data, prompts, dependencies, and evaluation results | Foundry model catalog + custom AIBoM templates | AI Architect / Lead Engineer |
| 3. Risk Classification + Approval Gates | Routes AI initiatives to the right governance path: self-serve, IT-approved, board-approved | Purview Risk + Power Platform managed environments + Entra access reviews | Risk Officer / Compliance Lead |
| 4. Data Residency + Access Controls | Ensures AI sees only the data it should, in the region it should | Purview DLP + Sensitivity Labels + Copilot data grounding controls + Entra Conditional Access | Compliance / Privacy Lead |
| 5. Audit + Observability | Captures every AI inference, tool call, and decision for review | Foundry observability + Purview Audit + Sentinel for AI | Security Operations / SOC |
| 6. Incident Response for AI | Red team, jailbreak detection, kill switch when an AI system goes wrong | Microsoft Agent Governance Toolkit + Foundry safety filters + Sentinel playbooks | Security Incident Lead |

Six components. Six owners. Each has a Microsoft product that does most of the work. That is the framework.

The rest of this article walks through each component: what it means, what to configure, and what the failure mode looks like when you skip it.

Component 1: AI Inventory + Shadow AI Discovery

You cannot govern what you cannot see. Most enterprises think they have 5 to 10 AI systems. The inventory reveals 30 to 200, depending on size.

What to build:

- A central registry of every sanctioned AI system: model, owner, business purpose, data classification, deployment environment, last review date.

- Active discovery for shadow AI: Defender for Cloud Apps to detect SaaS AI tools (ChatGPT, Claude used directly, Perplexity, and so on); the Power Platform CoE Starter Kit inventory for AI features inside flows and apps; and an Entra Workload Identity inventory for service principals making OpenAI or Anthropic API calls.

- A monthly “drift report”: new shadow AI detected, sanctioned systems that drifted out of compliance, decommissioned systems that still hold credentials.
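The registry and the monthly drift report can be sketched in a few lines. This is a minimal illustration, not a Purview or Defender integration: the `AISystemRecord` fields, the `drift_report` function, and the 90-day review window are all hypothetical names and values chosen for the example, and an overdue review is used here as a stand-in for "drifted out of compliance".

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class AISystemRecord:
    """One row in the central registry (field names are illustrative)."""
    name: str
    owner: str
    business_purpose: str
    data_classification: str
    environment: str
    last_review: date
    decommissioned: bool = False
    holds_credentials: bool = True


def drift_report(registry, discovered, today, max_review_age_days=90):
    """Compare the registry with discovery output and flag drift."""
    registered = {record.name for record in registry}
    cutoff = today - timedelta(days=max_review_age_days)
    return {
        # Seen by discovery but never registered: shadow AI.
        "new_shadow_ai": sorted(set(discovered) - registered),
        # Assumption: an overdue review is the proxy for compliance drift.
        "overdue_review": sorted(
            r.name for r in registry
            if not r.decommissioned and r.last_review < cutoff
        ),
        # Retired systems whose credentials were never revoked.
        "decommissioned_with_credentials": sorted(
            r.name for r in registry
            if r.decommissioned and r.holds_credentials
        ),
    }


registry = [
    AISystemRecord("support-triage-copilot", "Customer Operations Director",
                   "ticket triage", "confidential", "prod", date(2026, 4, 15)),
    AISystemRecord("legacy-summarizer", "IT Director", "meeting notes",
                   "general", "prod", date(2025, 9, 1), decommissioned=True),
]
report = drift_report(
    registry,
    discovered=["support-triage-copilot", "perplexity-web"],
    today=date(2026, 5, 1),
)
print(report)
```

In practice the `discovered` list would come from whatever export your discovery tooling produces; the point of the sketch is that the report is a set difference plus two filters, which makes it cheap to run every month.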

Microsoft tools:

- Microsoft Purview Data Map for data flow visibility (which datasets are reaching which AI systems).

- Defender for Cloud Apps for SaaS AI discovery (the unsanctioned consumer AI side).

- Entra Workload Identity + Conditional Access for workload identities, to inventory and restrict service-principal AI access.

- Power Platform CoE Starter Kit if Power Platform AI is in scope.

The failure mode if you skip this: Six months in, the SOC discovers a Power Automate flow that has been emailing customer PII to a free-tier Anthropic API endpoint for the last 90 days. Nobody knew it existed. There was no policy to violate because there was no inventory to register against.

Component 2: AI Bill of Materials

Borrowed from the SBOM (Software Bill of Materials) concept, the AIBoM is a per-AI-system disclosure of what is inside.

What an AIBoM contains:

| Field | Example |
|---|---|
| System name | Customer Support Triage Copilot |
| Owner | Customer Operations Director |
| Model(s) used | Azure OpenAI gpt-5, Claude Sonnet 4.6 (fallback) |
| Training data sources | None (inference only); RAG corpus = 12,400 KB articles from /support/kb |
| System prompt | Linked to repo path |
| Evaluation results | Foundry agent evaluation: groundedness 0.91, harm under 0.5%, last run 2026-04-20 |
| Risk class | Medium (customer-facing, no PII exposure, no financial outputs) |
| Compliance scope | ISO 42001 §6.1.3 risk treatment; EU AI Act limited-risk transparency obligation |
| Last review | 2026-04-15 |
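Because the AIBoM lives in a repo as YAML or JSON, it can be checked automatically on every commit. The sketch below is one possible shape, assuming a JSON document with the fields from the table above; the key names, the `validate_aibom` helper, and the 180-day review window are hypothetical choices for illustration, not a published schema.

```python
import json
from datetime import date

# Keys mirror the table above; the exact names are an assumption.
REQUIRED_FIELDS = (
    "system_name", "owner", "models", "training_data_sources",
    "system_prompt", "evaluation_results", "risk_class",
    "compliance_scope", "last_review",
)
RISK_CLASSES = ("low", "medium", "high")


def validate_aibom(aibom, today, max_age_days=180):
    """Return a list of human-readable problems; an empty list means OK."""
    problems = [
        f"missing field: {name}"
        for name in REQUIRED_FIELDS
        if not aibom.get(name)
    ]
    risk = aibom.get("risk_class")
    if risk and risk not in RISK_CLASSES:
        problems.append(f"unknown risk_class: {risk}")
    reviewed = aibom.get("last_review")
    if reviewed:
        age = (today - date.fromisoformat(reviewed)).days
        if age > max_age_days:
            problems.append(f"last_review is {age} days old")
    return problems


aibom = {
    "system_name": "Customer Support Triage Copilot",
    "owner": "Customer Operations Director",
    "models": ["Azure OpenAI gpt-5", "Claude Sonnet 4.6 (fallback)"],
    "training_data_sources": "None (inference only); RAG corpus = "
                             "12,400 KB articles from /support/kb",
    "system_prompt": "linked to repo path",
    "evaluation_results": {"groundedness": 0.91, "last_run": "2026-04-20"},
    "risk_class": "medium",
    "compliance_scope": ["ISO 42001 risk treatment",
                         "EU AI Act limited-risk transparency obligation"],
    "last_review": "2026-04-15",
}
problems = validate_aibom(aibom, today=date(2026, 5, 1))
print(json.dumps(aibom, indent=2))
print(problems)
```

Running a check like this in CI is one way to keep the AIBoM from going stale between audits: a missing owner or an old review date fails the build instead of surfacing six weeks into an audit.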

Microsoft tools:

- Azure AI Foundry model catalog as the model-registry source of truth.

- Foundry evaluation runs as the recurring quality signal.

- GitHub or Azure DevOps repos for system prompt versioning and the AIBoM YAML/JSON itself.

- Purview Sensitivity Labels applied to the AIBoM so it is audit-discoverable.

The failure mode if you skip this: Auditor asks “which model does the support triage agent use, and when did you last evaluate it for harm?” Three engineers and the operations director cannot give a consistent answer. Six weeks of cleanup work follows.

Component 3: Risk Classification + Approval Gates

Not every AI initiative needs board approval. Most need almost no approval. The framework has to route initiatives to the right governance path or it becomes a bottleneck nobody respects.

A working three-tier classification:

| Risk class | Examples | Approval path | Controls required |
|---|---|---|---|
| Low | Internal productivity (M365 Copilot for individuals), draft generation, code completion, internal chat over public docs | Self-serve with guardrails (DLP, conditional access, license assignment) | Purview DLP, M365 Copilot grounding controls, no PII flows |
| Medium | Customer-facing agents on internal data, RAG over confidential corpus, agent-to-agent workflows inside the tenant | IT review + AIBoM + Foundry evaluation gates | Foundry agent evaluation thresholds, Sentinel monitoring, named owner |
| High | Decisions affecting customers (credit, hiring, healthcare), agent-to-external-system actions, AI in regulated workflows | Risk Officer + Legal + Compliance review; board notification for new high-risk | Full ISO 42001 Annex A controls, EU AI Act high-risk obligations, red team + incident response plan |

The point of the tiering is to make low-risk initiatives frictionless and high-risk initiatives genuinely reviewed. A flat policy that requires a 40-page review for every Copilot use case loses to shadow IT. A flat policy that requires no review for high-risk credit decisions loses to the regulator.
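The routing logic in the table is simple enough to write down as code, which is a useful test of whether the tiers are unambiguous. The following is a sketch only: the `Initiative` attributes and the `classify` function are hypothetical, and the rule "any high-risk trait wins, then any medium-risk trait" is one reasonable reading of the table rather than a prescribed algorithm.

```python
from dataclasses import dataclass
from enum import Enum


class RiskClass(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass(frozen=True)
class Initiative:
    """Intake-form answers for one AI initiative (names are illustrative)."""
    name: str
    affects_customer_decisions: bool = False  # credit, hiring, healthcare
    acts_on_external_systems: bool = False
    regulated_workflow: bool = False
    customer_facing: bool = False
    uses_confidential_data: bool = False
    agent_to_agent: bool = False


APPROVAL_PATH = {
    RiskClass.LOW: "self-serve with guardrails",
    RiskClass.MEDIUM: "IT review + AIBoM + Foundry evaluation gates",
    RiskClass.HIGH: "Risk Officer + Legal + Compliance review",
}


def classify(initiative):
    """Highest matching tier wins; anything unmatched is low risk."""
    if (initiative.affects_customer_decisions
            or initiative.acts_on_external_systems
            or initiative.regulated_workflow):
        return RiskClass.HIGH
    if (initiative.customer_facing
            or initiative.uses_confidential_data
            or initiative.agent_to_agent):
        return RiskClass.MEDIUM
    return RiskClass.LOW


drafting = Initiative("copilot-drafting")
triage = Initiative("support-triage", customer_facing=True)
scoring = Initiative("credit-scoring", customer_facing=True,
                     affects_customer_decisions=True)
for item in (drafting, triage, scoring):
    tier = classify(item)
    print(item.name, tier.value, APPROVAL_PATH[tier])
```

Checking the high-risk traits first means an initiative cannot be downgraded by also matching a lower tier, which is the behavior a regulator would expect.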

Microsoft tools:

- Power Platform managed environments to enforce DLP and connector limits per environment tier.

- Entra Conditional Access + access reviews for the service principals running medium and high-risk agents.

- Purview Risk Management for the workflow that escalates an initiative from one tier to the next.

Component 4: Data Residency + Access Controls

AI sees what you let it see. Two failure modes here: too restrictive (Copilot is enabled but it cannot find anything useful, adoption stalls) and too permissive (Copilot answers questions using HR salary data the asker should not see).

What to configure:

- Sensitivity labels on every dataset, applied automatically via Purview classification rules.

- M365 Copilot grounding controls: explicitly designate SharePoint sites, Teams, and M365 groups that Copilot can or cannot ground responses on. (Microsoft expanded this control significantly in 2026.)

- Purview DLP policies on Power Platform connectors and Foundry agents, blocking high-sensitivity data from low-tier AI systems.

- Region pinning for residency-sensitive workloads: restrict Foundry deployments to the regions your contracts permit. (Note: Claude on Foundry deploys only in East US 2 and Sweden Central. See Claude on Azure: The Marketplace Billing Trap for why this matters for governance scope.)
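The two checks described above, a sensitivity ceiling per risk tier and a region allow-list, can be expressed as a single gate. This is a hedged sketch: the label names, the tier-to-label mapping, and the `may_ground_on` function are assumptions for illustration and do not correspond to Purview's actual label taxonomy or API.

```python
# Hypothetical label ordering, least to most sensitive.
LABEL_RANK = {
    "public": 0,
    "general": 1,
    "confidential": 2,
    "highly_confidential": 3,
}

# Assumption: each risk tier may read data up to this label.
MAX_LABEL_FOR_TIER = {
    "low": "general",
    "medium": "confidential",
    "high": "highly_confidential",
}


def may_ground_on(system_tier, dataset_label, deployment_region,
                  allowed_regions):
    """Return (allowed, reason) for one AI system and dataset pairing."""
    if deployment_region not in allowed_regions:
        return False, f"region {deployment_region} is not permitted"
    ceiling = MAX_LABEL_FOR_TIER[system_tier]
    if LABEL_RANK[dataset_label] > LABEL_RANK[ceiling]:
        return False, (f"label {dataset_label} exceeds the "
                       f"{system_tier}-tier ceiling ({ceiling})")
    return True, "ok"


eu_only = {"swedencentral"}
print(may_ground_on("low", "confidential", "swedencentral", eu_only))
print(may_ground_on("medium", "confidential", "eastus2", eu_only))
print(may_ground_on("medium", "confidential", "swedencentral", eu_only))
```

Returning a reason alongside the decision matters operationally: when a block fires, the owner needs to know whether to fix the label, the tier, or the deployment region.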

Microsoft tools:

- Purview Information Protection (sensitivity labels, classification, DLP).

- Microsoft 365 Copilot data grounding controls.

- Entra Conditional Access with location and device conditions for AI workload identities.

Component 5: Audit + Observability

Without audit logs, an AI program cannot answer the basic governance question: “what did the AI do, and when, for whom, with what data?”

What to capture, in three layers:

| Layer | What it logs | Microsoft tool |
|---|---|---|
| Application | User prompt, response, tool calls invoked, latency | Foundry observability, Application Insights |
| Data | What sources the model accessed, which sensitivity labels were touched | Purview Audit, Purview eDiscovery |
| Identity | Which user or service principal invoked which model, with what conditional access state | Entra sign-in logs, Defender for Identity |

The cross-cutting tool: Microsoft Sentinel for AI, which correlates the three layers and runs detection rules. The OWASP Top 10 for Agentic Applications (published 2026) maps directly into Sentinel content packs as detection logic.
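To make "correlates the three layers" concrete, here is a toy version of the join. It assumes every log event carries a shared `correlation_id` field, which is a simplification made for the example; real exports may need a join key assembled from timestamps, user IDs, and resource IDs. The `correlate` and `incomplete` helpers are hypothetical and are not part of Sentinel.

```python
from collections import defaultdict


def correlate(app_events, data_events, identity_events,
              key="correlation_id"):
    """Group events from the three layers under one shared key."""
    merged = defaultdict(dict)
    layers = (
        ("application", app_events),
        ("data", data_events),
        ("identity", identity_events),
    )
    for layer, events in layers:
        for event in events:
            merged[event[key]][layer] = event
    return dict(merged)


def incomplete(merged):
    """Keys that are missing at least one of the three layers."""
    return sorted(cid for cid, layers in merged.items() if len(layers) < 3)


app = [{"correlation_id": "a1", "prompt": "summarize Q3 invoices"},
       {"correlation_id": "b2", "prompt": "draft reply"}]
data = [{"correlation_id": "a1", "labels_touched": ["confidential"]}]
identity = [{"correlation_id": "a1", "principal": "svc-triage"},
            {"correlation_id": "b2", "principal": "user@example.com"}]

merged = correlate(app, data, identity)
print(merged["a1"])
print(incomplete(merged))
```

The `incomplete` list is worth alerting on in its own right: an inference with no matching data-layer record is exactly the gap an auditor will find.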

Concrete signals to alert on:

- Prompt-injection patterns hitting a customer-facing agent

- Output containing data outside the user’s sensitivity label scope

- Service principal making OpenAI API calls outside business hours from non-corporate IP

- Agent tool-call rate spikes (potential runaway loop)

- Foundry evaluation drift (groundedness drops below threshold week-over-week)
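Two of the signals above reduce to simple arithmetic on a time series, sketched below. The window size, spike factor, and 0.85 groundedness threshold are illustrative defaults rather than recommended values, and the function names are hypothetical; a production rule would live in your SIEM's query language, not in Python.

```python
from statistics import mean


def tool_call_spike(counts_per_minute, window=5, factor=3.0):
    """True when the latest minute exceeds factor x the recent baseline."""
    if len(counts_per_minute) < window + 1:
        return False  # not enough history to form a baseline
    baseline = mean(counts_per_minute[-(window + 1):-1])
    # Floor the baseline at 1 so a quiet agent does not alert on 1 call.
    return counts_per_minute[-1] > factor * max(baseline, 1.0)


def groundedness_drift(weekly_scores, threshold=0.85):
    """True when the newest score falls below a threshold the prior week met."""
    if len(weekly_scores) < 2:
        return False
    previous, latest = weekly_scores[-2], weekly_scores[-1]
    return latest < threshold <= previous


print(tool_call_spike([4, 5, 6, 5, 5, 40]))
print(tool_call_spike([4, 5, 6, 5, 5, 9]))
print(groundedness_drift([0.91, 0.90, 0.82]))
```

Alerting only on the week the score crosses the threshold, rather than every week it stays below, keeps the signal from turning into noise that the owning team learns to ignore.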

The failure mode if you skip this: Auditor asks “show me everything Copilot did with customer financial data in Q3.” You have nothing. Or you have raw logs in 14 different systems and no way to correlate them.

Component 6: Incident Response for AI

When an AI system goes wrong, the response cannot be “we will look into it on Monday.” Operational governance includes a tested response plan.

What to have ready:

- Red team scope: monthly or quarterly adversarial testing of medium and high-risk AI systems. Microsoft’s PyRIT (open source) automates a lot of this.

- Jailbreak detection: Foundry safety filters at the model level + Sentinel correlation rules at the audit level.

- Kill switch: a documented procedure to disable an AI system in under 15 minutes. For Foundry agents, this is a deployment-state change. For Copilot, it is a license assignment removal. For Power Platform AI features, it is a DLP policy promotion to “block.”

- Communication template: who gets told (legal, customer team, regulator if EU AI Act high-risk), in what order, with what severity classification.
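The kill switch and communication template are easier to test when they exist as data rather than as a wiki page. The sketch below encodes the three platform-specific actions named above and the under-15-minute target. The platform keys, the `kill_switch_plan` helper, and the notification order are assumptions for illustration; the functions only produce a plan and do not call any Microsoft API.

```python
from datetime import datetime, timedelta

# Actions taken from the runbook text; the keys are hypothetical.
KILL_SWITCH_ACTION = {
    "foundry_agent": "change the deployment state to disabled",
    "m365_copilot": "remove the Copilot license assignment",
    "power_platform_ai": 'promote the DLP policy to "block"',
}


def kill_switch_plan(system_name, platform, eu_high_risk=False):
    """Build the runbook entry for disabling one AI system."""
    if platform not in KILL_SWITCH_ACTION:
        raise ValueError(f"no kill switch documented for {platform}")
    # Assumed order: legal first, then the customer team.
    notify = ["legal", "customer team"]
    if eu_high_risk:
        notify.append("regulator")
    return {
        "system": system_name,
        "action": KILL_SWITCH_ACTION[platform],
        "notify": notify,
    }


def met_target(started, disabled, target=timedelta(minutes=15)):
    """True when the system was disabled in under the target time."""
    return disabled - started < target


plan = kill_switch_plan("support-triage", "foundry_agent", eu_high_risk=True)
drill_start = datetime(2026, 5, 1, 2, 0)
drill_end = datetime(2026, 5, 1, 2, 11)
print(plan)
print(met_target(drill_start, drill_end))
```

Raising an error for an unknown platform is deliberate: a system with no documented kill switch should fail the drill loudly rather than fall through to a default.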

Microsoft tools:

- Microsoft Agent Governance Toolkit (open source, April 2026) for runtime security and automated compliance grading.

- PyRIT for adversarial testing.

- Foundry safety filters + Sentinel playbooks for detect + respond.

ISO 42001: Your Scope, Not Your Plan

ISO/IEC 42001:2023 is the world’s first AI management system standard. Microsoft Azure AI Foundry Models, Microsoft Security Copilot, and M365 Copilot have all been certified against it. The standard does not give you a framework. It gives you a measurable scope.

What ISO 42001 requires (loosely):

- A documented AI management system (the six components above are most of it)

- Risk treatment based on AI-specific risks (the OWASP Top 10 for Agentic Applications maps closely)

- Continuous improvement loop (the audit + observability layer feeds this)

- Senior leadership accountability (the risk classification + approval-gates component, escalated)

Why it matters even if you do not certify: EU AI Act high-risk obligations take effect August 2026. Customers will increasingly require ISO 42001 certification (or equivalent evidence) from vendors as a procurement gate. If you are selling AI-enabled software into regulated industries, you will be asked. Implementing the framework now means you are 70% of the way to certification when you decide to pursue it.

Microsoft’s certification of its own AI products is a meaningful signal. It also means that when you build on top of Foundry, Copilot, or Security Copilot, you can inherit some of the controls. Your AIBoM should explicitly note where you are leveraging Microsoft’s certified controls vs implementing your own.

EU AI Act: What Changes August 2026

The EU AI Act is in force, and high-risk AI obligations take effect August 2026. If you operate in the EU, sell to EU customers, or process EU residents’ data with AI, this matters.

What “high-risk” means in practice:

- AI used in employment decisions (hiring, performance, dismissal)

- AI used in essential services (credit, insurance, education)

- AI used in critical infrastructure

- AI used in law enforcement, migration, or judicial proceedings

- Biometric identification systems

What high-risk obligations require (selected):

- Risk management system across the AI system lifecycle

- Data governance covering training data quality and bias

- Technical documentation (read: AIBoM)

- Logging that allows traceability of AI decisions

- Human oversight measures

- Accuracy, robustness, and cybersecurity controls

- Conformity assessment before placing on the market

If your AI inventory has any high-risk systems, the August 2026 deadline is your forcing function. The six-component framework above is the implementation. Microsoft Agent Governance Toolkit explicitly maps to the EU AI Act, HIPAA, and SOC 2 in its compliance grading.

A 90-Day Implementation Plan

| Days | Focus | Deliverable |
|---|---|---|
| 1-15 | Inventory | Working AI inventory (sanctioned + shadow), Defender for Cloud Apps deployed, Purview Data Map mapping confirmed |
| 16-30 | AIBoM | Template defined, top 10 highest-risk AI systems documented, repos established |
| 31-45 | Risk classification | Three-tier policy approved, approval workflows wired into Power Platform managed environments + Entra access reviews |
| 46-60 | Data + access | Sensitivity labels rolled, Copilot grounding controls configured, DLP policies promoted |
| 61-75 | Observability | Foundry observability + Purview Audit + Sentinel detection content packs deployed and named owners assigned |
| 76-90 | Incident response | Red team run on top 3 systems, kill switch tested, communication template approved |

This is aggressive. A realistic enterprise lands in 120-180 days for the first cycle, then accelerates as the team learns the toolchain. The point of the 90-day plan is to set the direction. Even partial completion in 90 days beats a complete framework on paper that nobody implements.

What This Tells You About AI Governance Architecture

Three takeaways for the IT director planning a 2026 governance program.

First: stop looking for the perfect framework. NIST AI RMF, ISO 42001, EU AI Act, and Big-4 advisory frameworks all converge on roughly the same operational components. Pick one (ISO 42001 if you might certify, NIST AI RMF if you operate under US federal scope), implement the six components against it, and iterate. The differences between frameworks are smaller than the gap between “policy on paper” and “controls in production.”

Second: Microsoft’s tooling is good enough to start. Purview + Entra + Foundry + Agent Governance Toolkit covers 80% of the controls a typical enterprise needs. The remaining 20% (deep model evaluation, specialized red-teaming, multi-cloud AI inventory) often requires third-party tools, but you do not need them on day one. Implement what Microsoft ships, then decide where the gaps actually hurt.

Third: governance accelerates AI adoption; it does not slow it down. The teams I have seen succeed with M365 Copilot at scale all had a working governance program before the rollout. The teams that stalled were the ones treating governance as a Q4 afterthought. Counterintuitively, when employees know there is a clear approval path for medium-risk AI use, they bring more ideas forward, not fewer. Shadow AI thrives in ambiguity.

Related Reading

- Claude on Azure: The Marketplace Billing Trap - the third-party model billing rules that affect AI procurement governance scope

- Azure OpenAI PTU vs PAYG: The Real Break-Even Table - cost governance and procurement category implications

- AI Copilots vs Custom AI on Azure: Build vs Buy - how the build/buy decision affects which controls you inherit vs implement

The six-component framework above is the structure to use when designing a Microsoft AI governance program. The supporting articles in this cluster cover each component in more depth.
