Claude on Azure: The Marketplace Billing Trap

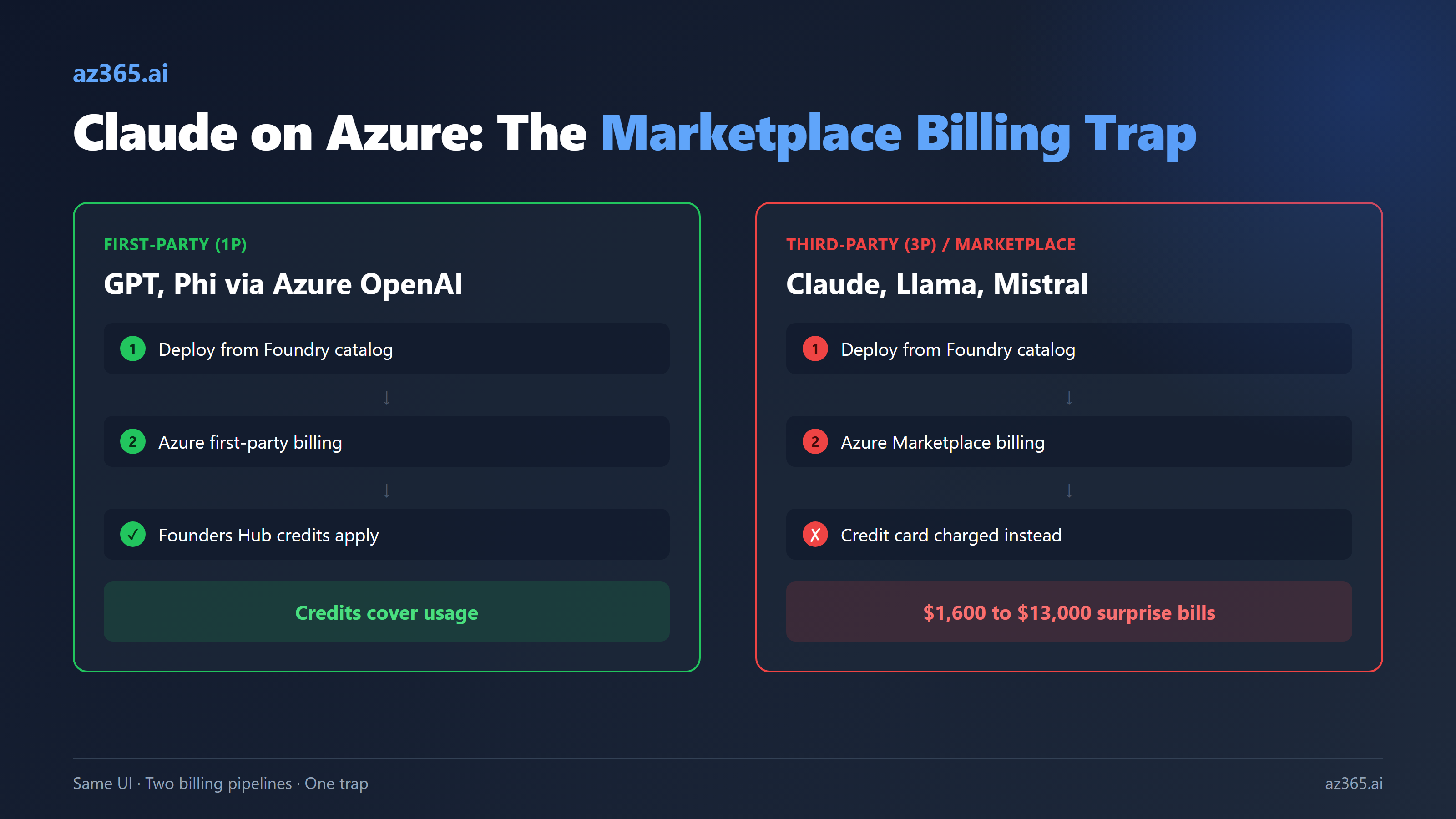

Three startup founders reported $1,600 to $13,000 in surprise Claude bills on Azure. Microsoft locked the Q&A thread. Here is exactly how the marketplace billing model breaks startup credits, where Claude Opus 4.7 actually deploys, and what architects must verify before going live.

In March 2026, a Microsoft for Startups founder published a cautionary tale. He had Founders Hub credits. He deployed Claude Opus on Azure AI Foundry. He shipped a feature. The first invoice was ¥237,081 (about $1,600 USD), charged directly to his credit card. The startup credits had not covered a single Claude token.

He was not alone. A second Japanese founder racked up over ¥2,000,000 (about $13,000) in Claude charges in one month. A German founder hit €999.60. By the time a Change.org petition went up on March 11, 2026, thirty-five founders had signed it. Microsoft’s own Q&A thread documenting the issue, posted by an affected EU founder in January 2026, was locked by Microsoft shortly after additional victims added their accounts. It remains locked today.

Microsoft’s response: “We listen closely to customer feedback.” Anthropic’s response: “We have no visibility into Azure billing.” Both confirmed in writing that nobody was accountable.

This is not a UI bug. It is the Marketplace billing model working as designed, and it is the single most important thing to understand before you deploy any third-party model on Azure, including Claude, Llama, Mistral, Cohere, or anything else that did not originate inside Microsoft.

What Actually Happened to the Founders

Three founders, three subscription types, three different invoice sizes. Same root cause.

The Japanese founder enrolled in Microsoft for Startups Founders Hub and received roughly $150,000 in Azure credits. He used Foundry to deploy Claude Opus. From the Foundry catalog UI, Claude appeared next to GPT-5 and Phi with the same “Deploy” button, the same Endpoint and Key ceremony, the same dashboard. Behind the scenes, two completely different billing pipelines were running: GPT through Azure first-party billing where Founders Hub credits applied, and Claude through Azure Marketplace where they did not. He did not know this until the invoice arrived. His direct quote, captured in the petition:

The UI makes no distinction between credit-covered and Marketplace-billed models.

The credit-card trap behind this is documented openly in Microsoft’s own subscription rules but easy to miss. From the official Foundry Claude deployment doc:

If you have an account with a credit card on file, the credit card will be charged instead of Azure Credits.

So even on a sponsored subscription with $150,000 in unspent credits, the credit card on file gets charged the moment a Marketplace product runs. The credits sit untouched. The card bleeds.

After the bills arrived, the support spiral began. Microsoft told founders to ask Anthropic for a refund. Anthropic told them they had no visibility into Azure billing. Both confirmed in writing. The thread the EU founder opened on Microsoft Q&A in January 2026 was locked by Microsoft after additional founders piled on. The Change.org petition launched March 11. The Register covered it on March 13. Microsoft’s only public response remained the boilerplate quote about feedback. The bills stood.

This is not a one-off UX bug. It is the fundamental architecture of how Azure resells third-party AI on its cloud, and the customer-relations response makes it clear that the design is unlikely to change soon.

Why Founders Hub Credits Do Not Cover Claude

The Microsoft Azure for Startups credit terms include a clause that almost nobody reads:

Azure credits can’t be used for non-Azure products, products purchased through Microsoft Azure Marketplace, or Microsoft Support Plans.

And separately, in the Foundry Claude deployment requirements:

The following subscription types are currently not supported: Enterprise Accounts located in South Korea, Cloud Solution Provider subscriptions, Azure subscriptions that don’t have an active pay-as-you-go billing method (for example, student, free trial, or startup credit–based accounts), Sponsored subscriptions that only use Azure credits.

Three of those four exclusions hit Claude directly. Claude is third-party branded (it is an Anthropic product). It is sold through Azure Marketplace. Sponsored credit-only subscriptions are explicitly excluded. So Founders Hub credits cannot cover Claude usage by three independent rules at once.

This is not a Claude-specific issue. The same applies to:

- Llama models from Meta

- Mistral models on the Mistral Marketplace listing

- Cohere models

- Stability AI image models

- Any other Models-as-a-Service offering that ships with a partner brand

If you are running on credits, the only AI models you can use without surprise charges are first-party Microsoft services: Azure OpenAI (GPT family, o-series), Phi from Microsoft Research, and other Microsoft-published models in Foundry. Everything else is Marketplace, regardless of how seamless the deployment UI feels.

The 1P vs 3P Decision Tree on Foundry

Foundry’s model catalog mixes first-party (1P) and third-party (3P) models in the same UI without much warning. The architectural decision tree before picking a model:

| Question | 1P (Azure OpenAI, Phi) | 3P / Marketplace (Claude, Llama, Mistral) |

|---|---|---|

| Billing pipeline | Azure first-party billing | Azure Marketplace |

| Founders Hub / startup credits cover it | Yes | No |

| Sponsored / MSDN / Visual Studio credits cover it | Yes | No |

| Free trial credits cover it | Yes (subject to limits) | No |

| Enterprise Agreement (EA) coverage | Yes (any geo) | Yes, EXCEPT EA accounts in South Korea |

| MCA-E coverage | Yes | Yes |

| CSP subscription support | Yes | Not supported |

| Pay-as-you-go subscription | Yes, no friction | Not currently - quota is zero by default |

| Default quota for new subscriptions | Usually allocated for OpenAI | Zero RPM, zero TPM until ticketed |

| Sponsored sub with credit card on file | Credits used first | Credit card charged immediately |

| Available regions | Many, varies by model | East US 2 and Sweden Central for Claude |

| Procurement category | Azure spend (cloud committed spend) | Marketplace spend (separate budget line) |

The last row catches most enterprises. In a large company, “Azure spend” and “Azure Marketplace spend” are tracked separately, may sit in different budgets, are governed by different procurement teams, and have different approval thresholds. A team with full Azure budget authority may have zero authority to approve Marketplace purchases. The UI does not enforce the distinction. Finance does.

Where Claude Actually Deploys

As of April 2026, Microsoft Foundry supports a substantial Claude lineup. From Microsoft’s own deployment documentation:

| Model | Status | Context window | Notes |

|---|---|---|---|

| claude-opus-4-7 | Preview (released April 16, 2026) | 1M tokens, 128K max output | Most capable Opus, adaptive thinking only |

| claude-opus-4-6 | Preview | 1M tokens, 128K max output | Production-stable Opus generation |

| claude-opus-4-5 | Preview | 200K tokens, 64K max output | Older Opus, smaller context |

| claude-opus-4-1 | Preview | 200K tokens | Legacy, prefer 4.6 or 4.7 |

| claude-sonnet-4-6 | Preview | 1M tokens, 128K max output | Frontier intelligence at scale, 1M GA |

| claude-sonnet-4-5 | Preview | 200K standard | 1M context beta retires April 30, 2026, migrate to 4.6 |

| claude-haiku-4-5 | Preview | 200K tokens | Speed and cost optimization |

| claude-mythos-preview | Gated research preview | 1M tokens, 128K max output | Cybersecurity, autonomous coding, long-running agents |

All Claude models on Foundry are deployment-type “Global Standard” only. There is no PTU, no provisioned throughput, no DataZone yet (US DataZone is on the roadmap). Two regions support deployment, period:

- East US 2 (the primary region, most stable)

- Sweden Central (added later, currently flakier in practice)

If your data residency requirements pin you to West Europe, UK South, Australia East, Germany West Central, or any other region, Claude on Azure is not an option. You either deploy via the Anthropic API directly (different data flow, different DPA), via AWS Bedrock (broader region coverage), or pick a different model.

There is one more wrinkle. Anthropic enforces its own “Supported Regions Policy” on top of Azure’s regional deployment. Even if your Azure tenant is in a supported deployment region, Anthropic may block the model based on the geography of the user invoking it. Verify both layers.

The Sweden Central availability also comes with an active failure mode. Multiple practitioners report deployment failing with this error on Claude Sonnet 4.6 and Claude Opus 4.6:

{

"status": "Failed",

"error": {

"code": "ResourceOperationFailure",

"message": "The resource operation completed with terminal provisioning state 'Failed'.",

"details": [{

"code": "AnthropicOrganizationCreationFailed",

"message": "Internal Server Error."

}]

}

}The Microsoft Q&A thread tracking this failure has been open since April 12, 2026 with no public root cause. The only known workaround is to retry the deployment in East US 2 instead. If you are designing architecture today, treat Sweden Central as best-effort and assume East US 2 is your real production region. Plan data residency and latency math accordingly.

Default Quota Is Zero. Yes, Zero.

This trap costs more days of engineering time than the billing trap costs dollars.

Even on a fully eligible Enterprise Agreement subscription, in East US 2, with the Microsoft.CognitiveServices provider registered and the right RBAC, the default Claude quota is zero. Zero RPM. Zero TPM. From Microsoft’s own quota table:

| Model | Default RPM | Default TPM | Enterprise + MCA-E RPM | Enterprise + MCA-E TPM |

|---|---|---|---|---|

| claude-opus-4-7 | 0 | 0 | 2,000 | 2,000,000 |

| claude-opus-4-6 | 0 | 0 | 2,000 | 2,000,000 |

| claude-sonnet-4-6 | 0 | 0 | 2,000 | 2,000,000 |

| claude-haiku-4-5 | 0 | 0 | 4,000 | 4,000,000 |

The “Default” column is what you get from Microsoft Foundry the moment the subscription becomes eligible. Nothing. The “Enterprise and MCA-E” column is what you get after a manual quota request through the quota increase request form is approved. This means every Claude project on Azure starts with a support ticket as a hard prerequisite, not as an escalation step.

Microsoft confirmed the design intent in a public Q&A response:

Claude models are Marketplace-based offerings and quota is not auto-assigned to every subscription. Even with valid billing and accepted Marketplace terms, a subscription can show 0/0 quota if it hasn’t been explicitly enabled on the backend.

The blast radius of this is bigger than it looks. If your dev tenant has Claude working and your prod tenant does not, your CI/CD pipeline will fail silently in production with what looks like a permissions issue. The error message gives no hint that the actual problem is “your prod subscription has not been backend-enabled by a Microsoft engineer yet.”

Plan to file the quota request as the first action, on day one of the project, before any code is written.

Real Cost Math: Claude Opus 4.7 vs GPT on Azure 1P

Claude Opus 4.7 is the current production-aimed Anthropic model on Foundry, released April 16, 2026. Pricing per million tokens:

- Input: $5 per million

- Output: $25 per million

- Cache reads: roughly 10% of input rate (about 90% savings)

- Cache writes: roughly 25% premium over input

- Batch: 50% discount on async workloads

The headline rates are unchanged from Opus 4.6, but the real cost is not, because Opus 4.7 ships with a new tokenizer that can produce up to 35% more tokens for the same input text. The multiplier is 1.0x to 1.35x in practice, with the higher end most often on code, structured data, and non-English text. So a request that cost $0.10 on Opus 4.6 can cost $0.10 to $0.135 on Opus 4.7 for identical work. A team running ~$10/day on 4.6 should expect ~$10 to $13.50/day on 4.7. Across a year that is up to a $1,200 swing on a small workload, before factoring scale-out.

Compare to GPT family on Azure 1P (first-party):

| Use case (rough) | Claude Opus 4.7 on Azure (Marketplace) | GPT family on Azure OpenAI (1P) |

|---|---|---|

| 10K req/day, 8K input, 1K output | ~$190/day on Opus 4.7 (after tokenizer multiplier) | Substantially cheaper, varies by GPT tier |

| Founders Hub / startup credits cover it | No | Yes |

| Subscription required | EA or MCA-E + non-South-Korea + zero-quota ticket | Most subs work, no ticket |

| Tokenizer surprise | Up to 35% more tokens vs the same content on 4.6 | Stable across versions |

| Available regions | East US 2, Sweden Central | Many |

| Reasoning quality on hard tasks | Strongest available 4.x family for long-context coding and agentic work | Strong; Opus 4.7 typically wins on long-context and multi-hour agent runs |

The math says: pick Claude Opus 4.7 when its reasoning ceiling and 1M context actually win for the workload, and you have a paid budget that can absorb both Marketplace billing and the tokenizer multiplier. Pick GPT on Azure 1P when good-enough reasoning meets the bar, you want credits to apply, and you do not need 1M context. There is no neutral default.

For high-volume classification or routing, Claude Sonnet 4.6 ($3/$15 per million tokens) or Haiku 4.5 is usually the right call. Sonnet 4.6 has 1M context generally available. Sonnet 4.5’s 1M-context beta retires April 30, 2026, so any code still using the context-1m-2025-08-07 beta header on Sonnet 4.5 must migrate before then.

The Pre-Flight Checklist Before You Deploy Claude

Most of this article’s pain is avoidable with a 30-minute pre-flight check. Run it before you commit any project to Claude on Azure.

- Confirm subscription eligibility. Run

az account show. Verify the subscription is EA (and NOT a Korean EA) or MCA-E with a billing method. CSP, sponsored, free-trial, MSDN, and credit-only subscriptions cannot deploy Claude. - Confirm credit applicability. If you are on Founders Hub, MSDN, sponsorship, or any credit pool, assume credits do NOT apply to Claude. If a credit card sits on the same subscription, that card is charged the moment Claude runs. Either commit to paid spend or pick a 1P model.

- Pick a region. East US 2 if at all possible. Sweden Central only if data residency demands it, with a documented fallback for the AnthropicOrganizationCreationFailed error.

- File the quota ticket BEFORE writing code. Default quota is zero. Request the Enterprise/MCA-E allocation for the specific Anthropic models on the specific subscription. Day one, not when CI/CD is failing.

- Set up Azure Monitor budget alerts on Marketplace category specifically. Marketplace charges roll up under a separate billing dimension. Filter on

MeterCategorycontaining the Anthropic publisher, not just total Azure spend. - Document the procurement category to finance. Tell finance that AI workload spend will land partly in Azure billing and partly in Marketplace billing. Confirm the budget structure supports both.

- Decide the fallback model. If Anthropic gets pulled, your subscription gets reclassified, or pricing shifts, what is your path? GPT-5 on Azure 1P? Claude direct via Anthropic API? AWS Bedrock? Have an answer documented before you start.

- Plan for the 35% tokenizer multiplier. If you are migrating from Opus 4.6 to 4.7, expect costs up to 35% higher for the same inputs. Decide whether the quality gain justifies it or whether 4.6 is the better cost ceiling for your use case.

Should You Deploy Claude on Azure At All?

This is the architecture question hiding under the cost question. There are three real choices for running Claude in production right now:

Option A: Claude on Azure AI Foundry (Marketplace). Wins when Microsoft is your dominant procurement vehicle, you need Foundry orchestration, you want Claude to sit alongside GPT-5 behind the same APIM gateway, and you have EA or MCA-E billing in a supported geography. Loses on credits, regions, CSP support, and the zero-default quota ceremony.

Option B: Claude direct via Anthropic API. Wins on speed (instant access, no quota tickets), region flexibility (Anthropic has more regions than Azure does for Claude), latest model availability (Anthropic ships new Claude versions to their direct API first by days to weeks), and credits if you negotiate Anthropic startup credits separately. Loses on Microsoft procurement consolidation, Foundry-native tracing, and any compliance posture that requires “everything goes through Azure.”

Option C: Claude via AWS Bedrock or GCP Vertex. Wins for shops already on AWS or GCP, where Claude integrates with the cloud-native observability stack and Marketplace billing is a single bill from a single vendor. Loses on Microsoft integration entirely, but for organizations where Microsoft is not the primary cloud, this is often the cleanest path.

The honest answer is that most enterprises end up with Option A for production and Option B for development. Architects who pretend “Azure has Claude now, problem solved” without understanding the Marketplace mechanics will hit the same trap thirty-five founders documented in the petition.

What This Tells You About Multi-Provider AI Architecture

Take a step back. Why do these traps exist?

Because Azure is not a model factory. Azure is a procurement and compute layer that resells AI models from many parties, including Anthropic, Meta, Mistral, Cohere, Stability, and its own OpenAI partnership. The Marketplace billing model exists because each of those vendors has its own DPA, its own pricing, its own region availability, its own quota system, and its own subscription rules. Microsoft cannot absorb all of that into one billing pipeline. They can hide it behind a unified UI, which they do. They cannot make the underlying complexity disappear, no matter how seamless the catalog feels.

For architects, the practical takeaway is provider-neutral by design. The model layer of your AI architecture will always be heterogeneous. Build for that. Use APIM or your own gateway as a routing layer that can shift workloads between Claude (Azure or direct), GPT-5, Llama, and whatever ships next. Treat the question “where does this model run, on what billing pipeline, in what region, with what subscription” as a first-class architecture concern, not a procurement concern that gets handed off.

The founders who got burned were not technically wrong. Their architecture worked. Their procurement assumptions did not match procurement reality. That gap, between architecture and procurement, is exactly where senior AI architects earn their seat at the table.

Related Reading

- Logic Apps as MCP Servers: The Architecture That Actually Works - how to expose Azure resources to AI agents (any provider)

- AI Copilots vs Custom AI on Azure: Build vs Buy - when first-party Microsoft AI wins vs custom on Foundry

- Building AI Solutions on Azure: The Architecture That Actually Works - multi-component AI stack patterns

If you are architecting Claude or other multi-provider AI for a Microsoft business app stack and want a sanity check on the trap matrix above, reach out.

Stay in the loop

Get new posts delivered to your inbox. No spam, unsubscribe anytime.

Related articles

Azure OpenAI PTU vs PAYG: The Real Break-Even Table

Break-even calculators say PTU wins at 150M tokens per month. Real-world utilization breaks that math. Here is the actual table from Microsoft's PTU throughput data, with the utilization curve most architects miss.

AI Engineering Productivity ROI: Tokens vs Closed Tickets

Vendor AI dashboards count tokens. The CFO counts closed tickets. A framework with cited inputs and an illustrative ROI calculator for the AI engineering operating model.

Microsoft 365 Copilot ROI Calculator: The Honest Math

Forrester says 353% ROI. Microsoft's own RCT says 0.5 hours per week. The interactive calculator below uses RCT-grounded defaults and shows exactly which lever your business case depends on.